New feature of Bloomy's EFT Module for TestStand improves data recovery in the event of a crash

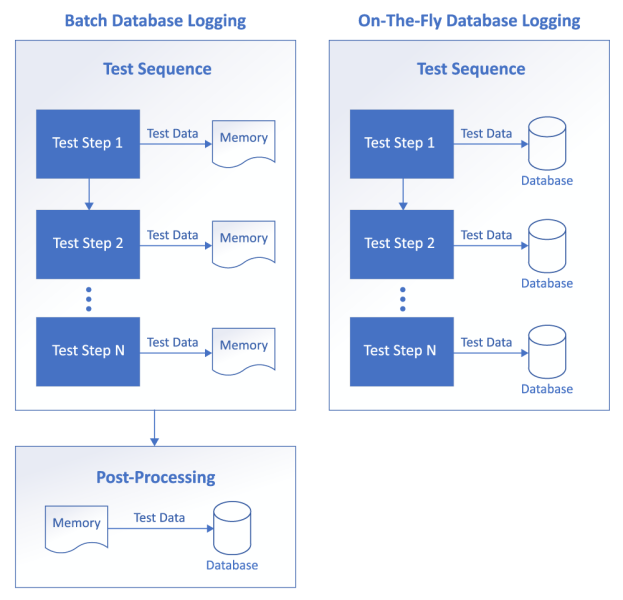

Bloomy recently added a new feature to the database logging function of the EFT Module for TestStand, available in versions 2019 and later. This feature enables real-time, also known as on-the-fly (OTF), data logging to a database. You now have two options for logging your test data to a database: 1) traditional batch data logging, or 2) OTF data logging.

Before we take a look at which data logging option is best for your use case, let’s first get a better understanding of how each of these data logging features operates.

Understanding the two database logging options

In the traditional batch data logging mode, the test result data is pushed to the database when all of the testing is completed. That is, in TestStand, when the test sequence has completed executing all of its test steps, all of the test data (aka a “batch” of data) is pushed to the database at one time. Depending on the data captured during the entire test sequence, this can be a significant amount of data. We’ll talk more about this in a minute.

OTF datalogging pushes smaller amounts of data to the database much more frequently, or “on-the-fly”. For example, during the execution of a TestStand test sequence, after every test step completes execution, the test data from that test step can be pushed to the database. In this mode, smaller sets of test data are pushed to the database many times during a test cycle.
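To make the difference concrete, here is a minimal sketch of the two modes. It is not the EFT Module's API: it uses an in-memory SQLite database as a stand-in for the real database, and the names `run_sequence`, `flush`, `rows_logged`, and the `crash_after` parameter are hypothetical, chosen only for illustration.

```python
import sqlite3

def flush(conn, pending):
    """Write all pending results to the database and empty the buffer."""
    conn.executemany("INSERT INTO results (step, value) VALUES (?, ?)", pending)
    conn.commit()
    pending.clear()

def run_sequence(conn, steps, mode, crash_after=None):
    """Run hypothetical test steps, logging in 'batch' or 'otf' mode."""
    pending = []  # results held in memory until they are written
    for i, (name, value) in enumerate(steps):
        if crash_after is not None and i == crash_after:
            raise RuntimeError("simulated crash")  # stand-in for a PC crash
        pending.append((name, value))
        if mode == "otf":
            flush(conn, pending)  # push this step's data right away
    flush(conn, pending)  # batch mode: everything is pushed here, at the end

def rows_logged(mode, crash_after=None):
    """Return how many rows reached the database for a 5-step sequence."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE results (step TEXT, value REAL)")
    steps = [(f"step_{i}", float(i)) for i in range(5)]
    try:
        run_sequence(conn, steps, mode, crash_after)
    except RuntimeError:
        pass  # the sequence died; see what survived in the database
    return conn.execute("SELECT COUNT(*) FROM results").fetchone()[0]

print(rows_logged("batch"))                 # 5
print(rows_logged("otf"))                   # 5
print(rows_logged("batch", crash_after=3))  # 0
print(rows_logged("otf", crash_after=3))    # 3
```

When the sequence runs to completion, both modes log the same five rows. When the simulated crash hits before the fourth step, the batch run has written nothing, while the OTF run has already saved the three completed steps.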

Below is a diagram depicting the execution flow of batch and OTF database logging when executing a TestStand test sequence. It is intended as a visual companion to the two descriptions above.

When to use batch vs. OTF database logging

Okay, now that we have a fundamental understanding of each database logging feature, let’s compare the pros and cons of each approach.

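| | Batch | On-The-Fly |
|---|---|---|
| Pros | Optimizes test execution speed | Minimizes memory usage; reduces the potential loss of data due to a catastrophic event |
| Cons | Memory usage grows with the amount of data captured and the test duration; all test data can be lost if a catastrophic event occurs during test execution | Adds some overhead to test execution time; temporary data files can stack up if data is captured faster than it is processed |
| Recommendation | Short-duration test cycles where test time optimization is critical, such as functional test | Long-duration testing, such as hours- or days-long environmental stress screening (ESS) |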

Taking a closer look at these pros and cons…

While batch datalogging does offer the advantage of optimizing test execution speed, it comes with a caveat: if your test captures large amounts of data and/or runs for extended periods of time, the accumulated data consumes a growing amount of memory with no upper bound. This can degrade the performance of the PC, which can actually slow down test execution over time. On the con side for batch datalogging, if your tests are relatively long, not only are you consuming more memory, but you also risk losing all of that data if a catastrophic event occurs during test execution. Examples of catastrophic events are:

- Test station PC crashes due to low or no memory.

- A sudden Windows update that requires the PC to reboot.

- Test instrument hangs indefinitely during test execution.

OTF mitigates the cons of batch datalogging by writing smaller amounts of data to the database at more frequent intervals. This minimizes memory usage and helps to mitigate the potential loss of data due to a catastrophic event. The con of OTF is that some overhead is added to the test execution time as the data is pushed to the database during the test cycle (such as at the end of every test step). Also, be aware that if the data capture rate is faster than the configured OTF processing strategy can handle, temporary data files may stack up. In most practical situations, this backlog should resolve itself.
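The stack-up behavior can be pictured with a toy model. The sketch below is an assumption about how such a backlog behaves, not a description of the EFT Module's internals: it supposes that a fixed number of temporary files is produced per time tick while data is being captured, and that a fixed number is processed per tick. The function name and all of the rates are hypothetical.

```python
def simulate_backlog(capture_per_tick, process_per_tick, capture_ticks, total_ticks):
    """Return the number of unprocessed temporary files after each tick."""
    backlog = 0
    history = []
    for tick in range(total_ticks):
        if tick < capture_ticks:
            backlog += capture_per_tick          # new files written by the test
        backlog -= min(backlog, process_per_tick)  # files pushed to the database
        history.append(backlog)
    return history

# Capture outpaces processing for 4 ticks, then capture stops.
print(simulate_backlog(3, 2, capture_ticks=4, total_ticks=8))
# [1, 2, 3, 4, 2, 0, 0, 0]
```

In this model the backlog grows only while capture outpaces processing, and it drains once capture slows or stops, which is one plausible reading of why the stack-up tends to resolve itself.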

Okay, so we have finally reached the point where we can make some decisions on when to use each of these datalogging features. As pointed out in the pros and cons, for any testing that is executed over a long period of time, such as hours- or days-long environmental stress screening (ESS) testing, it is best to use the OTF datalogging option. For short-duration test cycles where test time optimization is critical, as is often the case with functional test, the batch datalogging feature is probably the wise choice.

In summary, the key parameters you need to keep in mind are:

1) Is test time optimization important?

2) How much test data am I collecting?

3) Is the test cycle long enough that the potential loss of data and the increased memory usage are important to mitigate?

Based on what is most important to your test requirements, and referring to the pros and cons table, it should become apparent which datalogging feature to choose.
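As a rough illustration, the three questions can be folded into a small helper. This is only a sketch: the function name, the 60-minute cutoff for a "long" test cycle, and the "either" fallback for cases the guidance above does not cover are all assumptions made for the example, not recommended values.

```python
def choose_logging_mode(speed_critical, cycle_minutes, large_data=False,
                        long_cycle_minutes=60.0):
    """Suggest 'otf', 'batch', or 'either' from the three questions.

    long_cycle_minutes is a hypothetical cutoff; tune it to your own tests.
    """
    if cycle_minutes >= long_cycle_minutes or large_data:
        return "otf"    # long runs or lots of data: limit memory use and data loss
    if speed_critical:
        return "batch"  # short runs where test time optimization matters most
    return "either"     # no strong driver either way

# A two-minute functional test where cycle time matters:
print(choose_logging_mode(speed_critical=True, cycle_minutes=2))         # batch
# A two-day ESS run:
print(choose_logging_mode(speed_critical=False, cycle_minutes=48 * 60))  # otf
```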
